LLM integration is transforming how businesses operate by bringing powerful large language models (LLMs) into everyday workflows. These models, such as GPT, are designed to understand and generate human-like text, making them incredibly valuable for automating tasks like customer support, data analysis, and content creation. In simple terms, LLM integration involves embedding these models into enterprise systems to enhance productivity and efficiency. The importance of LLMs in modern enterprises can’t be overstated, as they empower businesses to make data-driven decisions, improve customer experiences, and streamline operations, giving them a competitive edge in today’s fast-paced market.

LLM Integration: Key Concepts

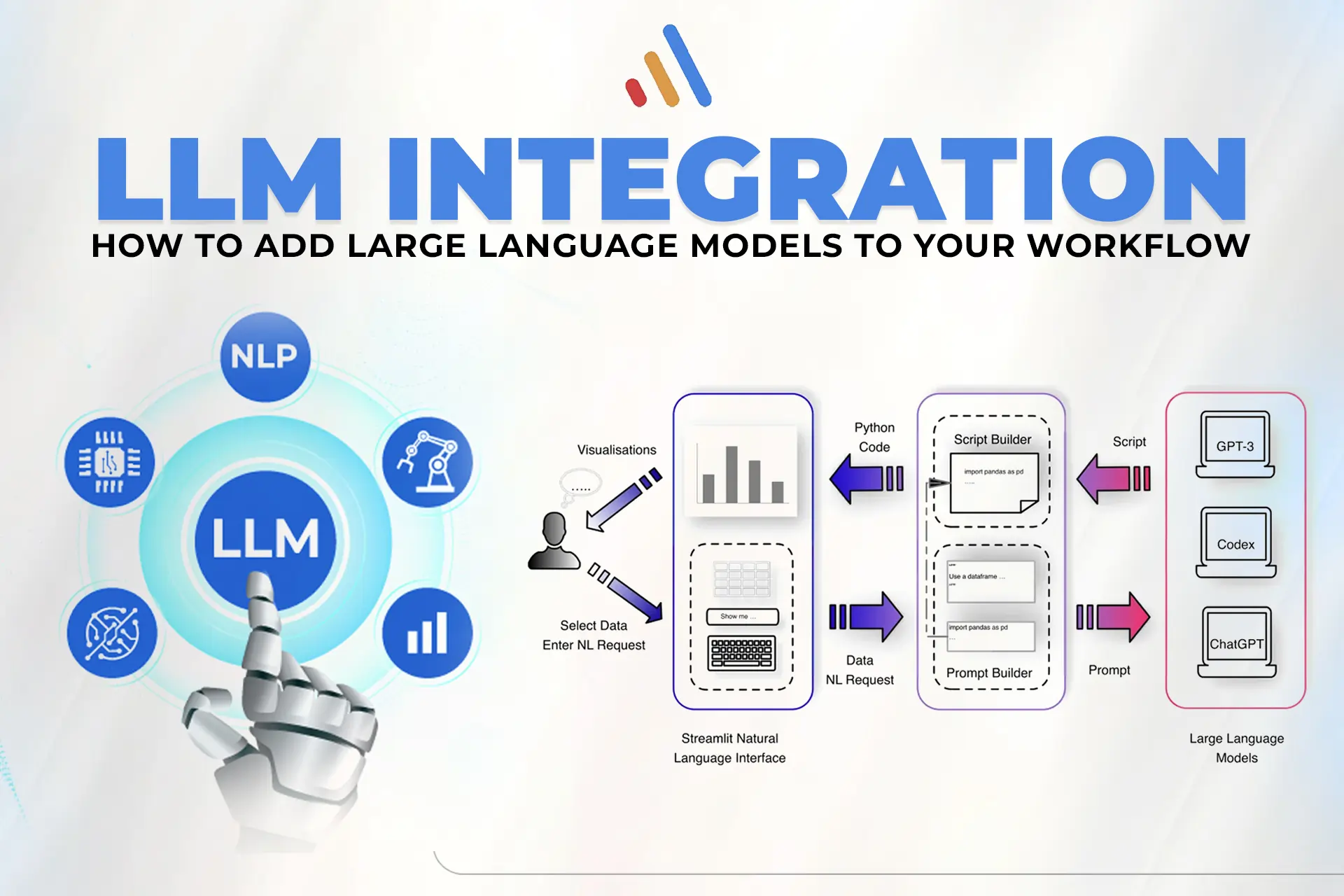

LLMs (Large Language Models) are machine learning models designed to understand, generate, and manipulate human-like text based on the data they have been trained on. They are typically integrated into enterprise applications through APIs that accept text and return generated language in real time. Whether you are automating customer support or creating a more intuitive user interface for your business, LLM integration lets systems understand and respond in ways that mimic human interaction.

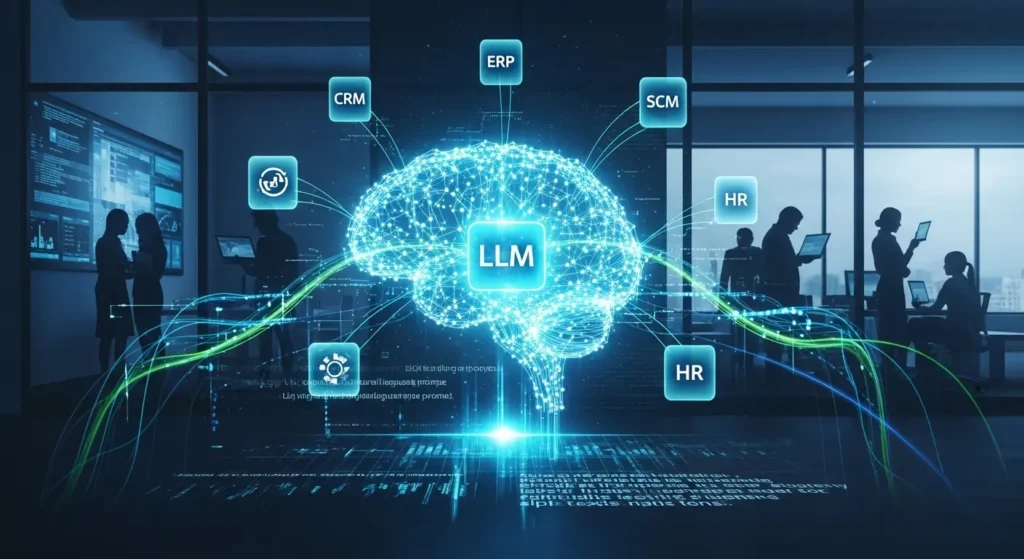

The power of LLMs lies in how readily they fit into enterprise workflows. Through an API, businesses can send a wide range of data, from customer queries to operational records, and receive context-aware responses that support decision-making and user interaction. These integrations can streamline workflows across sales, support, marketing, and data management.

Types of LLM Integration

When considering LLM integration into business systems, there are primarily two approaches: API-based integration and SDK-based integration.

- API-Based Integration: The application sends requests to a model hosted by a provider, usually in the cloud, so businesses can add LLM capabilities to existing systems without extensive infrastructure changes. Common examples include connecting a customer service platform to a model or using the model to generate content. This approach is scalable and flexible, and cloud-native deployments are often recommended for businesses seeking easy, cost-effective solutions.

- SDK-Based Integration: An SDK (Software Development Kit) gives developers the tools to embed LLMs directly into their applications, enabling more customized solutions. This is useful for businesses with specialized needs, such as incorporating predictive analytics or advanced data processing.
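To make the API-based approach concrete, here is a minimal sketch using only the Python standard library. The endpoint URL, the payload fields (`prompt`, `max_tokens`), and the `text` field in the reply are assumptions made for illustration; a real provider defines its own URL and schema, so check its documentation before adapting this.

```python
import json
import urllib.request

# Hypothetical endpoint, for illustration only.
API_URL = "https://llm.example.com/v1/generate"


def build_request(prompt: str, api_key: str, max_tokens: int = 256) -> urllib.request.Request:
    """Package a prompt as an authenticated JSON POST request."""
    payload = {"prompt": prompt, "max_tokens": max_tokens}  # assumed schema
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def generate(prompt: str, api_key: str, timeout: float = 30.0) -> str:
    """Send the request and return the generated text.

    Assumes the service replies with JSON shaped like {"text": "..."}.
    """
    request = build_request(prompt, api_key)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))["text"]
```

Keeping request construction separate from sending makes the integration easy to unit test without network access. An SDK would typically wrap these same steps behind a client object.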

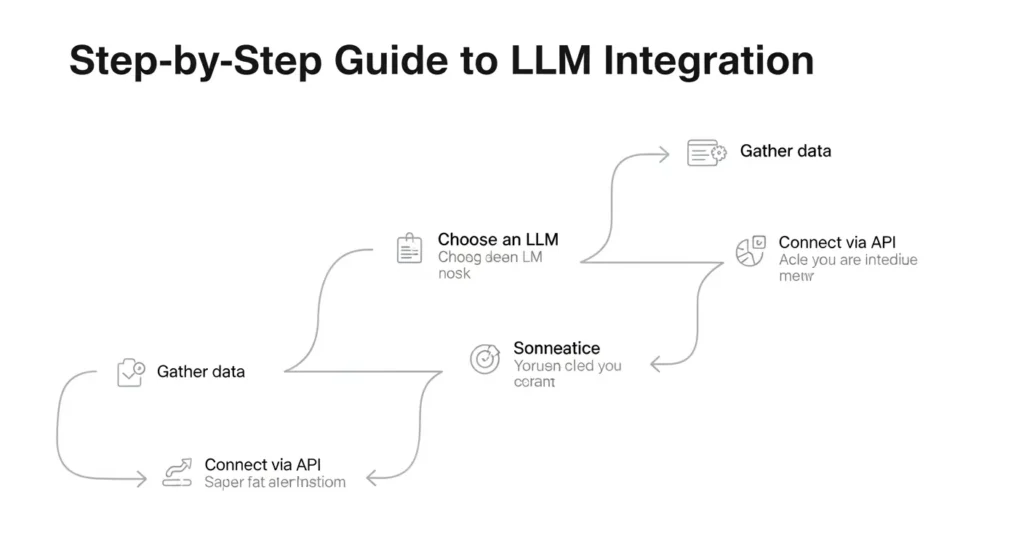

Step-by-Step Guide to LLM Integration

Step 1: Understand Business Requirements

The first step in successful LLM integration is defining the problem your business is solving. Are you looking to automate customer support, improve content creation, or enhance decision-making? Identifying the LLM use case is essential to guide the integration process.

For example, in an e-commerce business, LLMs can improve customer experience by providing automated responses for inquiries, helping with product recommendations, and analyzing customer feedback. On the other hand, marketing teams might use LLMs to generate personalized email campaigns based on customer insights and preferences.

Step 2: Data Preparation and Preprocessing

The quality of data you feed into the model is crucial for its performance. Before integrating an LLM into your business systems, ensure that your data is well-structured, clean, and free from biases. Preprocessing data often involves:

- Cleaning the data: Removing noise, duplicates, and irrelevant information.

- Categorizing and labeling: Organizing data into categories that make it easier for the model to process.

- Normalizing inputs: Standardizing formats and values for consistency.

Effective data preparation ensures that the LLM performs optimally, making it crucial for enterprise applications where high-quality output is necessary for success.
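The three preprocessing steps above can be sketched in a few lines of Python. The record layout (dictionaries with `text` and `category` keys) and the `uncategorized` default label are assumptions for this example, not a required format.

```python
import re
import unicodedata


def normalize_text(text: str) -> str:
    """Standardize the Unicode form, collapse whitespace, and trim."""
    text = unicodedata.normalize("NFKC", text)
    return re.sub(r"\s+", " ", text).strip()


def prepare_records(records: list[dict]) -> list[dict]:
    """Clean, label, and de-duplicate raw records before sending them to a model."""
    seen = set()
    cleaned = []
    for record in records:
        text = normalize_text(record.get("text", ""))
        if not text:
            continue  # cleaning: drop empty entries
        key = text.casefold()
        if key in seen:
            continue  # cleaning: drop case-insensitive duplicates
        seen.add(key)
        # labeling: normalize the category, falling back to a default label
        category = normalize_text(record.get("category", "")).lower() or "uncategorized"
        cleaned.append({"text": text, "category": category})
    return cleaned
```

For example, passing two differently spaced copies of the same customer question returns a single cleaned record, with the first occurrence kept.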

Step 3: Choosing the Right Integration Tools

Selecting the right tools for LLM integration is essential to ensure smooth deployment and scalability. Some of the most popular integration tools include:

- LangChain for streamlining LLM integration.

- Hugging Face API for using pre-trained LLMs across various applications.

- Google AI and Amazon SageMaker for robust cloud deployment.

Each tool has its strengths, depending on the enterprise’s needs. For instance, LangChain is great for businesses focused on creating multi-step workflows, while Hugging Face offers one of the largest collections of pre-trained models.

Step 4: Building Middleware for Communication Between Systems

Middleware is the glue that connects your LLM with existing business systems. It handles:

- API calls: Ensuring smooth communication between the business application and the LLM.

- Business logic: Processing the responses from the LLM and interpreting them in the context of the business needs.

Middleware helps ensure that the LLM can act as a centralized language processor, delivering responses and data in real time without compromising performance or accuracy.
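A minimal sketch of such a middleware layer follows. The `SupportMiddleware` class, the prompt wording, and the escalation keywords are hypothetical; the model is passed in as a plain callable, so any provider client or a test stub can sit behind it.

```python
from dataclasses import dataclass
from typing import Callable

# Any function that takes a prompt and returns the model's text.
LLMBackend = Callable[[str], str]


@dataclass
class SupportReply:
    text: str
    escalate: bool  # True when a human agent should review the conversation


class SupportMiddleware:
    """Sits between a business application and an LLM backend."""

    def __init__(self, backend: LLMBackend,
                 escalation_terms: tuple = ("refund", "legal", "complaint")):
        self.backend = backend
        self.escalation_terms = escalation_terms

    def handle(self, customer_message: str) -> SupportReply:
        # API call: wrap the raw message in a prompt and call the model.
        prompt = (
            "You are a support assistant. Answer briefly.\n\n"
            f"Customer: {customer_message.strip()}"
        )
        try:
            raw = self.backend(prompt)
        except Exception:
            # Keep the application responsive if the model call fails.
            return SupportReply("Sorry, we could not process your request.", True)
        # Business logic: route sensitive topics to a human.
        lowered = customer_message.lower()
        escalate = any(term in lowered for term in self.escalation_terms)
        return SupportReply(raw.strip(), escalate)
```

Because the backend is injected, the same middleware can be exercised in tests with a stub function and switched to a real client in production.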

Step 5: Testing and Validation

Before going live with your LLM integration, it is crucial to conduct comprehensive testing. This includes:

- Functional testing to ensure the LLM works as intended.

- User acceptance testing (UAT) to confirm the LLM meets the business needs.

- A/B testing of different integration methods or responses.

By measuring key performance indicators (KPIs), such as response time, accuracy of outputs, and user satisfaction, you can refine your integration strategy.
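As an illustration, the sketch below measures two of these KPIs, accuracy and response time, over a small set of test cases. The `evaluate` helper and the substring-based accuracy check are simplifying assumptions; production evaluations usually need a richer notion of correctness.

```python
import time
from statistics import mean


def evaluate(model, test_cases):
    """Run (prompt, expected) pairs through a model callable and report KPIs.

    An answer counts as correct when it contains the expected string,
    ignoring case. This is a deliberately simple proxy for accuracy.
    """
    if not test_cases:
        raise ValueError("test_cases must not be empty")
    latencies = []
    correct = 0
    for prompt, expected in test_cases:
        start = time.perf_counter()
        answer = model(prompt)
        latencies.append(time.perf_counter() - start)
        if expected.lower() in answer.lower():
            correct += 1
    return {
        "accuracy": correct / len(test_cases),
        "avg_latency_s": mean(latencies),
        "max_latency_s": max(latencies),
    }
```

Running the same test cases against two integration variants gives a simple basis for the A/B comparison mentioned above.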

Step 6: Deployment and Ongoing Monitoring

Once the LLM integration is complete, the next step is deployment. However, integrating the model into production is only the beginning. Ongoing monitoring and fine-tuning are essential to ensure the LLM continues to perform effectively.

Regular updates, monitoring model outputs for hallucinations (plausible-sounding but incorrect information), and periodically retraining or fine-tuning the model with fresh data are necessary to maintain the LLM’s accuracy and relevance.
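One simple way to monitor outputs after deployment is to track how often recent responses were flagged as incorrect, whether by reviewers or by automated checks. The sketch below assumes a hypothetical `OutputMonitor` class, and its window size and alert threshold are placeholder values to tune per application.

```python
from collections import deque


class OutputMonitor:
    """Tracks the share of flagged outputs over a rolling window."""

    def __init__(self, window: int = 100, alert_threshold: float = 0.05):
        self.results = deque(maxlen=window)  # oldest entries fall off automatically
        self.alert_threshold = alert_threshold

    def record(self, flagged: bool) -> None:
        """Record whether the latest output was flagged as incorrect."""
        self.results.append(flagged)

    @property
    def flagged_rate(self) -> float:
        if not self.results:
            return 0.0
        return sum(self.results) / len(self.results)

    def should_alert(self) -> bool:
        """True when the recent flagged rate exceeds the threshold."""
        return self.flagged_rate > self.alert_threshold
```

A rising flagged rate is a signal to review prompts, refresh reference data, or consider fine-tuning.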

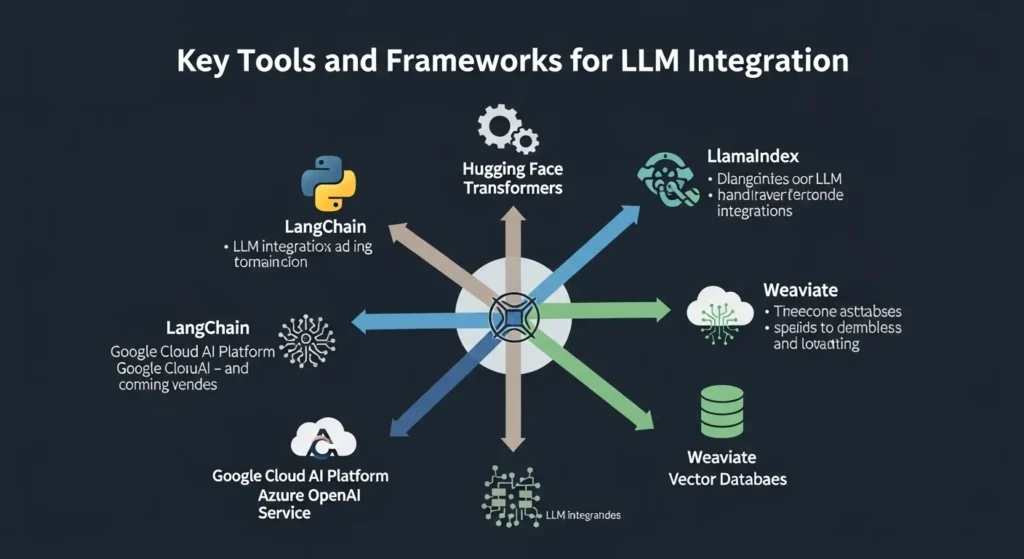

Key Tools and Frameworks for LLM Integration

AI and NLP Tools

Several tools and frameworks are available to assist with LLM integration:

- TensorFlow and PyTorch: Two of the most popular deep learning frameworks for training and deploying LLMs.

- Hugging Face Transformers: A library offering a wide range of pre-trained models.

- OpenAI API: Allows businesses to leverage powerful LLMs like GPT for a variety of tasks.

These tools offer the flexibility to train, test, and deploy LLMs in multiple environments, ensuring that businesses can scale their LLM-powered applications as their needs grow.

Benefits of LLM Integration in Business

Automation and Efficiency

Integrating LLMs enables businesses to automate repetitive tasks that would otherwise require human intervention. Whether it’s automated customer service, content generation, or data analysis, LLMs help businesses save time and resources.

Enhancing Customer Experience

LLM-powered chatbots and virtual assistants provide faster, more accurate, and personalized interactions for customers. By integrating an LLM, businesses can offer 24/7 support and address a wide range of customer inquiries with ease.

Improving Data Analysis and Decision-Making

LLMs can analyze large amounts of data in real time, providing businesses with actionable insights and improving decision-making. By interpreting unstructured data, such as customer reviews or feedback, LLMs can help businesses identify trends, customer sentiment, and areas for improvement.

Challenges and Limitations of LLM Integration

Data Privacy and Security

One of the key challenges when integrating LLMs into business systems is ensuring data privacy and security. As LLMs process sensitive business data, it’s critical to adhere to data protection regulations such as GDPR or CCPA. Businesses must implement robust security measures to safeguard customer and organizational data.

To address these concerns, it’s important to:

- Encrypt data both in transit and at rest.

- Regularly audit and monitor AI systems for compliance with privacy regulations.

- Use data anonymization techniques where applicable to mitigate the risk of exposing sensitive information.

With the right data security protocols, businesses can confidently leverage LLMs without compromising privacy or facing regulatory challenges.
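As a small example of anonymization, the sketch below masks email addresses and phone numbers before text is sent to an LLM. The regular expressions are deliberately simple assumptions and will miss many formats, so treat this as a starting point and not a complete PII filter.

```python
import re

# Simplified patterns, for illustration only.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)
```

Redacting on the way in means the provider never receives the raw identifiers, which complements encryption in transit and at rest.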

Dealing with Inaccurate Outputs (Hallucinations)

LLMs, like GPT models, are capable of generating human-like text, but they are not infallible. Sometimes these models produce output that sounds plausible but is factually wrong or unsupported by the input, a phenomenon known as hallucination. This can be problematic for businesses, especially in high-stakes applications like legal advice or healthcare data.

To mitigate this, businesses can:

- Implement real-time monitoring to catch and correct erroneous outputs.

- Introduce human oversight in critical areas, ensuring that LLM outputs are double-checked before being used for decision-making.

- Use hybrid models, where the LLM is paired with rule-based systems to help filter out invalid information.

By addressing the risks associated with hallucination, businesses can increase the reliability of their LLM-powered systems.
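The hybrid approach can be illustrated with a rule-based check that compares any price quoted in a model's answer against a trusted price list. The function names, the dollar-amount pattern, and the fallback message are assumptions for this sketch.

```python
import re
from decimal import Decimal

# Matches dollar amounts such as $20 or $19.99.
PRICE_RE = re.compile(r"\$(\d+(?:\.\d+)?)")


def unverified_prices(answer: str, allowed_prices: set) -> list:
    """Return prices quoted in the answer that are not in the trusted list."""
    quoted = [Decimal(p) for p in PRICE_RE.findall(answer)]
    return [p for p in quoted if p not in allowed_prices]


def guarded_answer(answer: str, allowed_prices: set,
                   fallback: str = "Let me connect you with an agent to confirm pricing.") -> str:
    """Pass the answer through only if every quoted price is verified."""
    if unverified_prices(answer, allowed_prices):
        return fallback
    return answer
```

The same pattern applies to other facts that can be checked against a system of record, such as order numbers or policy dates.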

Cost and Infrastructure

Integrating LLMs into business workflows requires a solid technical infrastructure, which can involve significant costs. These expenses come from:

- Cloud infrastructure for scalable deployment.

- Fees for API calls to access LLM services.

- Investment in training models and hardware resources for on-premise solutions.

While the benefits of LLM integration outweigh the costs in many cases, businesses must be mindful of these expenses and plan their budgets accordingly. For companies just starting, using cloud-based LLM APIs can be a cost-effective solution before investing in more extensive infrastructure.
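For budgeting API fees, a back-of-the-envelope estimate is often enough to start. The sketch below assumes per-token pricing with separate input and output rates; the rates and volumes in the usage note are placeholders, not any provider's actual prices.

```python
def monthly_api_cost(requests_per_day: int,
                     input_tokens: int,
                     output_tokens: int,
                     price_in_per_1k: float,
                     price_out_per_1k: float,
                     days: int = 30) -> float:
    """Estimate monthly API spend from average per-request token counts."""
    per_request = (
        input_tokens / 1000 * price_in_per_1k
        + output_tokens / 1000 * price_out_per_1k
    )
    return per_request * requests_per_day * days
```

With placeholder values of 1,000 requests per day, 500 input tokens and 200 output tokens per request, and rates of 0.001 and 0.002 per 1,000 tokens, the estimate works out to about 27 per month in whatever currency the rates use.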

Future of LLM Integration in Enterprise Systems

Innovations in LLM Technology

The field of LLMs is rapidly evolving, with new advancements making them more powerful and versatile. Upcoming innovations include:

- Multimodal models that process both text and visual data, opening up new opportunities for industries like e-commerce (e.g., visual search engines powered by LLMs).

- Custom-trained LLMs tailored to specific business needs, which can enhance the accuracy of predictions and recommendations.

- Newer generations of models beyond GPT-4 that offer even better performance, such as following complex instructions more reliably and producing more contextually relevant outputs.

These developments will make LLM integration even more valuable for businesses, improving everything from customer interactions to predictive analytics.

LLMs in Future Business Strategies

As LLMs become more refined, their role in business strategy will expand:

- Predictive analytics powered by LLMs will allow companies to make data-driven decisions more efficiently, potentially transforming sales strategies, inventory management, and marketing campaigns.

- Personalized customer experiences will be the norm, as LLMs can analyze consumer behavior and tailor interactions to individual preferences.

- LLMs will increasingly be used in AI-driven business models, where they power autonomous operations that improve efficiency and reduce operational costs.

Looking ahead, LLM integration will become a cornerstone of business transformation, providing organizations with the tools they need to thrive in an increasingly data-driven world.

Additional Considerations for LLM Integration

Cross-Industry Use Cases for LLM Integration

While much of the current focus is on enterprise workflows, the potential of LLM integration spans across various industries. For example:

- Healthcare: LLMs can automate medical record analysis, generate personalized health recommendations, and assist in diagnosis by processing large datasets.

- Retail: LLMs can improve inventory management, customer service, and sales forecasting.

- Finance: LLMs help with fraud detection, risk assessment, and real-time financial forecasting.

By exploring industry-specific use cases, businesses can see the full potential of LLM integration.

Best Practices for Managing Multiple LLM Integrations in Large Enterprises

For large organizations with multiple LLM-powered applications, management practices are essential. Companies need to:

- Maintain a centralized governance framework for LLMs to ensure uniformity across departments.

- Implement version control for LLM models, ensuring that all teams are using the most up-to-date versions.

- Ensure interdepartmental collaboration to avoid silos and make the most of cross-functional insights.

Effective LLM management ensures that businesses are maximizing the value of each integration while minimizing operational risks.
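One lightweight way to support centralized governance and version control is a shared registry that maps each use case to an approved model version. The `ModelRegistry` class and the model names in the usage note are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelConfig:
    model: str    # model identifier (hypothetical names in this example)
    version: str  # pinned version that teams are expected to use
    owner: str    # team accountable for this integration


class ModelRegistry:
    """Central record of which model version each use case may call."""

    def __init__(self):
        self._approved = {}

    def approve(self, use_case: str, config: ModelConfig) -> None:
        """Register or update the approved configuration for a use case."""
        self._approved[use_case] = config

    def resolve(self, use_case: str) -> ModelConfig:
        """Look up the approved configuration, failing loudly if none exists."""
        try:
            return self._approved[use_case]
        except KeyError:
            raise LookupError(f"No approved model for use case {use_case!r}") from None
```

For instance, after `registry.approve("support-chat", ModelConfig("example-model", "2024-06", "support-team"))`, every application that calls `registry.resolve("support-chat")` receives the same pinned version.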

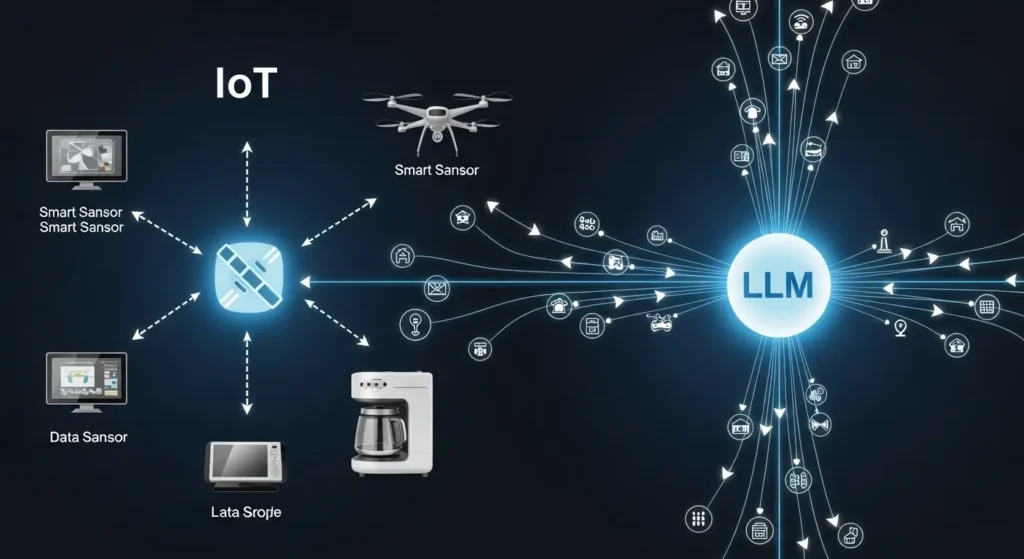

Integrating LLMs with Emerging Technologies (IoT, Blockchain)

The integration of LLMs with emerging technologies such as IoT and blockchain opens up new frontiers:

- IoT and LLMs: LLMs can be integrated with IoT sensors to analyze real-time data from connected devices, offering predictive insights for industries like manufacturing, agriculture, and logistics.

- LLM and Blockchain: LLMs can process blockchain data and generate reports on transactions, contracts, and compliance.

These integrations will unlock advanced use cases, making LLM technology even more impactful in next-gen business solutions.

Frequently Asked Questions

What is LLM integration in software development?

LLM integration is the process of connecting Large Language Models (such as GPT-4, Claude, or Llama) into an existing software application via APIs or local hosting. Unlike using a standalone chatbot, integration allows the software to automatically process data, generate content, or perform reasoning tasks within its own user interface and workflows.

What are the primary benefits of integrating LLMs into business workflows?

The main benefits include significant time savings through automation, the ability to analyze vast amounts of unstructured data quickly, and enhanced user experiences through natural language interfaces. Businesses often use integrated LLMs for automated customer support, document summarization, and intelligent code generation.

How do I ensure data security during LLM integration?

Data security is a top priority when connecting AI to your infrastructure. Best practices include using enterprise-grade API plans whose terms state that your data is not used for training, encrypting data in transit and at rest, and filtering inputs for prompt injection, where crafted text tries to override the system’s instructions or extract its internal logic.

What is the difference between an API-based and a Self-Hosted LLM integration?

- API-Based: You connect to a model hosted by a provider (like OpenAI). It is faster to deploy and requires less hardware but involves ongoing costs per request.

- Self-Hosted: You run an open-weight model (like Llama 3) on your own servers. This keeps data inside your own infrastructure and avoids per-request fees but requires significant upfront investment in GPU hardware and technical maintenance.

Does LLM integration require a complete system overhaul?

No. Most integrations are designed to be modular. You can start by adding LLM capabilities to a single feature—such as a smart search bar or an automated email drafter—using middleware and webhooks without rewriting your entire codebase.

How can I reduce the costs of LLM API usage?

To optimize costs, you can implement “Prompt Engineering” to keep queries concise, use “Caching” to store responses for frequently asked questions, or use smaller, specialized models for simple tasks while reserving larger models for complex reasoning.
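The caching idea can be sketched as a thin wrapper around any model callable. The `CachedLLM` class is hypothetical, and the in-memory dictionary is an assumption for brevity; a shared store with expiry would be more typical in production.

```python
import hashlib


class CachedLLM:
    """Returns stored answers for repeated prompts instead of calling the model again."""

    def __init__(self, backend):
        self.backend = backend  # any callable: prompt -> answer
        self._cache = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(prompt: str) -> str:
        # Normalize case and whitespace so trivially different prompts share an entry.
        normalized = " ".join(prompt.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def ask(self, prompt: str) -> str:
        key = self._key(prompt)
        if key in self._cache:
            self.hits += 1
            return self._cache[key]
        self.misses += 1
        answer = self.backend(prompt)  # only cache misses incur an API call
        self._cache[key] = answer
        return answer
```

Comparing `hits` with `misses` over time shows how many paid requests the cache is saving.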

Conclusion

LLM integration offers businesses a tremendous opportunity to automate workflows, enhance customer experiences, and make data-driven decisions. As businesses continue to integrate large language models into their systems, it’s important to navigate challenges like data security, costs, and accuracy of outputs. However, the future is bright for LLM-powered business strategies, with innovations in multimodal models, predictive analytics, and industry-specific applications driving continued growth.